For the first time, students can bypass the thinking math is designed to build.

A recent electroencephalography (EEG) study at MIT found decreased neural engagement when students used LLMs to assist in essay writing. Beta-band connectivity, which is associated with active thinking and cognitive effort, peaked only when students thought independently.

Students in the AI-supported condition also showed:

- weaker memory of their own writing minutes later

- lower levels of ownership and metacognition

- a stronger tendency toward passive consumption rather than idea generation

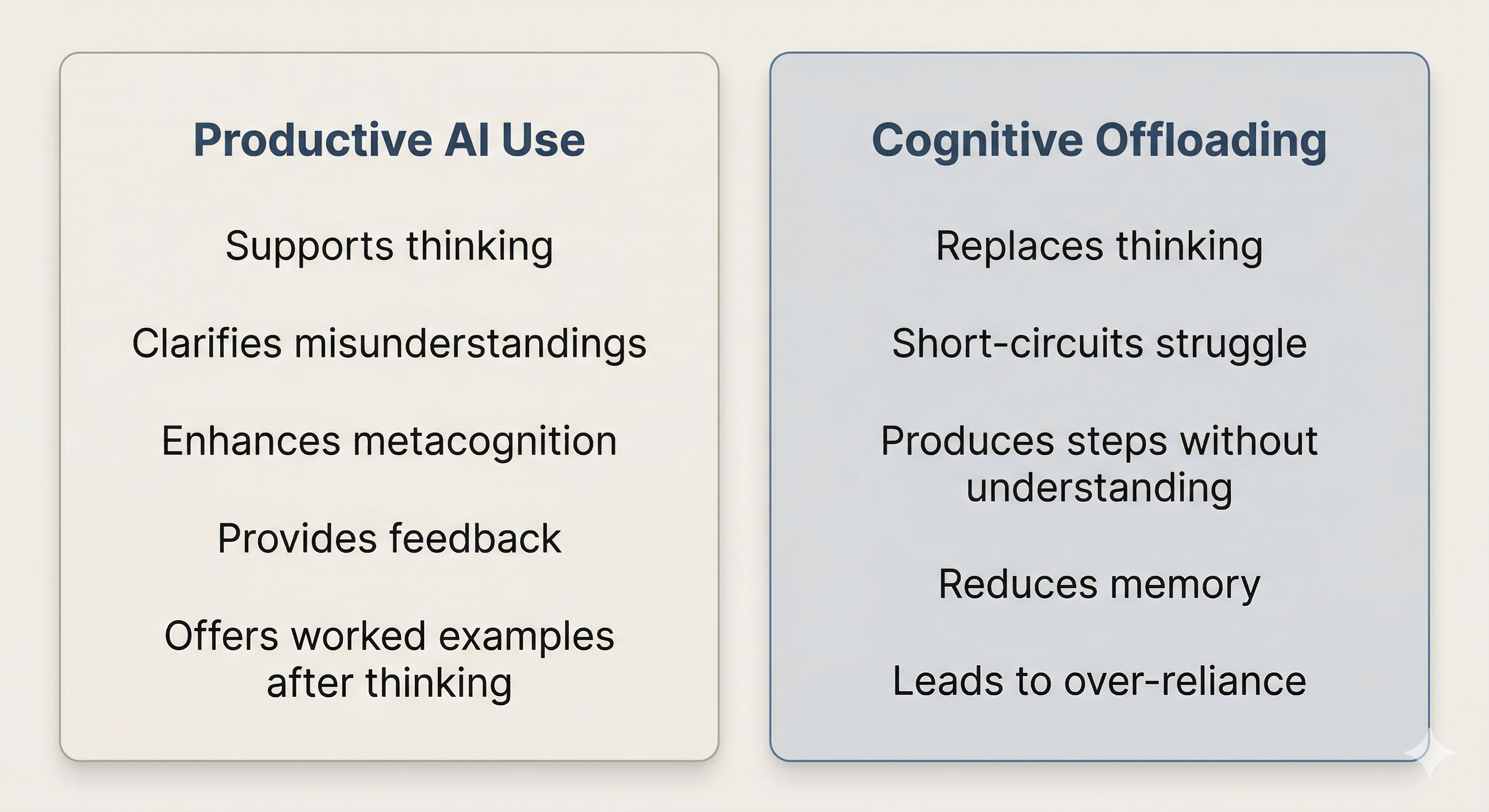

This matters because LLMs may feel helpful while reducing the very mental processes that build mathematical understanding. As the paper states, “The convenience of instant answers that LLMs provide can encourage passive consumption of information, which may lead to superficial engagement, weakened critical thinking skills, less deep understanding of the materials, and less long-term memory formation.”

While this study centers on writing, the underlying cognitive mechanisms are not domain-specific.

These findings matter in math education because critical thinking, retention, sense of ownership, problem-solving stamina, and the ability to explain one’s reasoning rely on those same neural pathways. When AI enables students to bypass the struggle phase, the neural connections that support their learning are weak, if they form at all.

Yet these conclusions do not suggest that LLMs should be removed from classrooms. They suggest something more important: the learning value of AI depends on how students use it.

According to the authors, “academic self-efficacy, a student's belief in their ability to effectively plan, organize, and execute academic tasks, also contributes to how LLMs are used for learning.” Students with low self-efficacy are more likely to rely on AI, especially under academic stress. In those moments, immediate solutions can take priority over the development of cognitive and creative skill.

The pattern becomes cyclical. High self-efficacy leads to more strategic AI use, which supports greater engagement, stronger critical thinking, deeper understanding, and better memory formation. The reverse also holds: low self-efficacy leads to heavier reliance on AI, which weakens each of those outcomes.

To protect learning in the AI era, instructors must design intentionally across three dimensions: the cognitive dimension, the instructional dimension, and the social dimension.

The first—and most foundational—is the cognitive dimension, which deals with how learners manage cognitive load. Metacognition is essential. Deliverables should be designed to capture students’ metacognitive process, not just their final answers.

At a higher level, conversations about math identity, self-efficacy, and empowerment must also be surfaced. The research suggests that when students believe in themselves as mathematicians, they are more likely to use GenAI as a guiding tool rather than a cognitive offloader. Sarah Strong’s Dear Math and Carol Dweck’s work on growth mindset offer strong foundations here.

When these internal conditions are present—when students are thinking about their thinking and believe themselves capable of doing so—their AI use becomes more selective, intentional, and metacognitive.

When the human side is addressed, some best practices emerge in the instructional dimension.

Students need specific prompt requirements when interacting with GenAI. For example: include at least one sense-making question, one reflection question, one applied example, and one question about assumptions, limitations, or possible errors.

Effective AI-supported activities follow a simple framework:

- Attempt-first thinking — Students engage with the problem before consulting AI.

- Guided questioning — Students use AI for clarification, critique, or application.

- Collaborative reflection — Students share findings and reasoning with peers to validate understanding.

If we want students to build reasoning skills, we also need to help them see what we see when we recognize excellence. One practical activity is asking students to reverse engineer professional or AI-generated outputs so they can identify what is structurally strong, what is shallow, and what is missing.

These guardrails support learning, but they also do something deeper: they reinforce identity. When students are required to explain, justify, and reflect, they begin to see themselves as mathematicians who can reason through complexity.

Below are structured prompt types that help protect and develop thinking.

Sense-making prompts

- Ask for step-by-step explanations rather than final answers.

- Use AI as a thought partner to explore multiple ways of thinking about or solving a problem.

Reflection prompts

Use these to build metacognition and self-regulation.

- “Here is a photo of my work and solution. Act like a supportive tutor and point out my strengths and areas for improvement based on my approach.”

- “Can you help me identify what part of the problem I misunderstood and how I can approach similar problems next time?”

- “Can you help me compare two strategies I used and decide when each is more effective?”

- “Help me turn my mistakes into a study plan for tomorrow.”

Challenge prompts

- “Here’s what I still find confusing. Ask me three questions that will help me understand it better and test my understanding.”

- “Challenge me with one follow-up question that checks whether I really understand the concept.”

- “Pretend you’re a confused classmate that I am helping. Ask me questions that help me explain my thinking more clearly.”

Application prompts

- “Can you give me a real-world situation where this concept applies and help me model it mathematically?”

- “Create a new problem that uses this concept in a different context, and let me try it before you help.”

- “How would this concept show up in a field or topic I care about?”

- “Give me a slightly more challenging version of this problem that builds on the same idea.”

- “Can you help me adapt this method to a new type of problem?”

In addition to the cognitive and instructional dimensions, the social dimension is increasingly important in the AI era. As students lean on GenAI, they can drift into an isolated learning environment shaped by immediacy, validation, and ease.

Smith and MacGregor (1992) explain that collaboration is crucial to learning because it requires students to actively explain their thinking. That act alone demands deeper understanding. When students defend an approach, question a peer’s reasoning, or revise their own explanation, they are practicing metacognition and strengthening critical thought.

Chatfield (2025) highlights another layer of the social dimension: “When students see their perspectives treated with respect and their struggles understood, engagement is liable to follow. The classroom becomes a space not just for personal achievement but for sociable sense-making” (p. 6).

Smith and MacGregor go further, arguing that collaborative learning helps sustain democracy itself. “Dialogue, deliberation, and consensus-building out of differences are strong threads in the fabric of collaborative learning, and in civic life as well” (1992, p. 2).

Collaborative activity can deepen understanding, diversify approaches, build confidence, and increase connection. It also shifts roles. Students become active pioneers of their own learning, using AI as a tool rather than a substitute. Teachers move from center stage to a more demanding role—designing, guiding, noticing, and pressing students toward ownership.

Teachers must model how to think with AI, not around it.

Because GenAI amplifies the conditions around learning, teachers must design conditions that require reasoning, reflection, and ownership.

Appendix: Designing for Thinking Classroom Applications

Below are lesson plan ideas to support structured and practical GenAI implementation.

AI Mathematician

Summary: Interview a Mathematician: Discovery, Struggle, and the Birth of an Idea

Learning Goals:

- Humanize Mathematics: Students understand math as a human, iterative process to improve interest and sense-making.

- Dialogic Learning: Students ask thought-provoking questions, clarify uncertainties, and challenge disagreements.

- Contextual Motivation: Students connect abstract ideas to the real-world problems those ideas were designed to solve.

AI Role: Simulates a historical voice to enable dialogue, not deliver answers.

Real-World Concept Application

Summary: Students investigate how a mathematical concept operates inside a real-world domain they care about.

Learning Goals:

- Deep Conceptual Understanding: Investigate how a mathematical concept operates within a real-world domain students genuinely care about.

- Guarded AI Collaboration: Practice structured AI use by asking purposeful questions, challenging outputs, and connecting ideas rather than accepting answers passively.

- Metacognitive Awareness: Develop thoughtful questions for the AI tool by clarifying uncertainties, identifying what is already understood, and determining what support will move thinking forward.

- Increased Engagement & Relevance: Experience mathematics as personally meaningful by situating concepts within interesting, authentic contexts.

- Higher-Order Thinking: Move beyond procedure to apply, analyze, evaluate, and synthesize mathematical ideas within real-world situations.

- Mathematical Self-Efficacy: Strengthen confidence by using AI strategically to support understanding.

AI Role: Acts as a thought partner for exploration and extension, not a shortcut to answers.